Hello guys, welcome back to my blog. In this article, I will discuss Computer Vision in ADAS, its use cases from lane keeping to collision avoidance, and the key algorithms behind it.

Ask questions if you have any electrical, electronics, or computer science doubts. You can also catch me on Instagram – CS Electrical & Electronics

Computer Vision In ADAS

As vehicles become smarter and safer, Advanced Driver Assistance Systems (ADAS) have taken center stage in modern automotive development. Among the many enabling technologies, Computer Vision plays a pivotal role in allowing vehicles to interpret and respond to their surroundings.

From simple lane-keeping alerts to complex collision avoidance maneuvers, computer vision enables the machine to “see,” understand, and act, making the driving experience safer for everyone.

What is Computer Vision?

Computer Vision is a field of artificial intelligence (AI) that enables computers to derive meaningful information from digital images or videos. It’s about replicating human vision using cameras, algorithms, and processing power.

In ADAS, it translates camera inputs into real-time actionable data, detecting lanes, vehicles, pedestrians, signs, and even driver behavior.

Role of Computer Vision in ADAS

ADAS systems rely on sensors such as cameras, radar, and LiDAR to perceive the environment. While radar and LiDAR measure distance directly, vision systems interpret visual cues such as lane markings, colors, signs, and moving objects.

Computer Vision systems can:

- Detect lanes, road edges, and obstacles

- Recognize signs and signals

- Monitor driver alertness

- Predict collision risks based on visual input

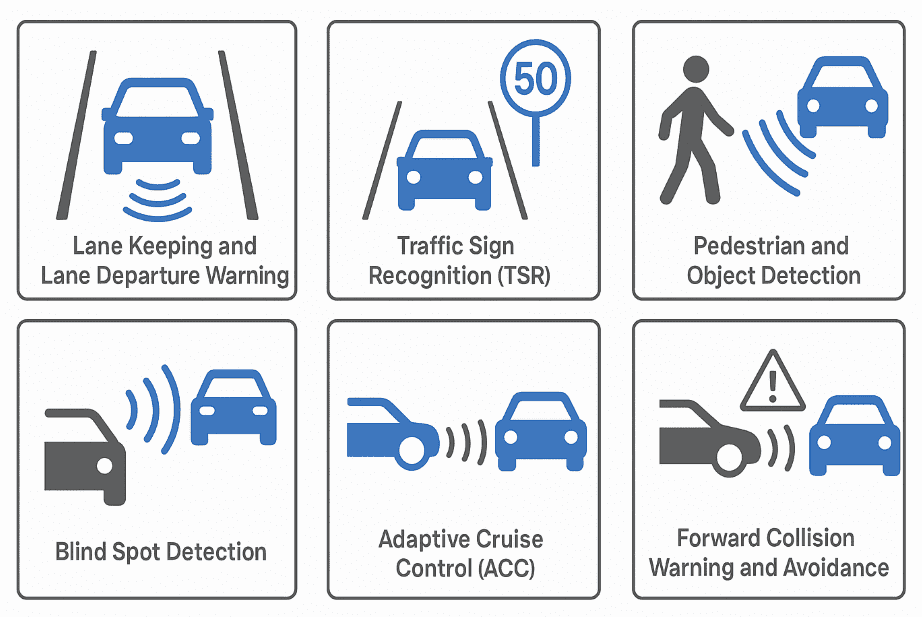

Core ADAS Features Powered by Computer Vision

01. Lane Departure Warning and Lane Keeping Assist (LDW & LKAS): Vision-based systems detect lane markings on the road using edge detection and pattern recognition. If the vehicle drifts out of its lane unintentionally, LDW alerts the driver and LKAS applies a gentle steering correction to bring the car back.

02. Traffic Sign Recognition (TSR): Using image classifiers, typically convolutional neural networks (CNNs), the system identifies speed limits, stop signs, and warning signs and shows them on the dashboard in real time.

03. Pedestrian and Object Detection: Object detection models such as YOLO (You Only Look Once) and SSD (Single Shot MultiBox Detector) let the system detect pedestrians, cyclists, and other obstacles so that collisions can be prevented.

04. Blind Spot Detection: Cameras mounted on mirrors or fenders use computer vision to monitor areas not visible to the driver and alert during lane changes.

05. Adaptive Cruise Control (ACC): While radar measures the distance to the car ahead, computer vision helps recognize lane curvature, merging traffic, or slow/stopped vehicles.

06. Forward Collision Warning & Avoidance: Real-time object detection and tracking help the car estimate Time-To-Collision (TTC) and apply automatic emergency braking if needed.

07. Driver Monitoring Systems (DMS): Internal cameras analyze facial expressions and eye movements to detect fatigue or distraction, issuing alerts or controlling the vehicle when needed.
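The Time-To-Collision used for forward collision warning can be estimated from a single camera without knowing the absolute distance. Under a pinhole camera model, an object's image width is inversely proportional to its range, so if the width grows by a factor s = w_curr / w_prev over an interval Δt at a constant closing speed, then TTC ≈ Δt / (s - 1). Below is a minimal Python sketch of this scale-change method, assuming a tracker already supplies the bounding-box widths; the function names and the 2.5 s warning threshold are hypothetical, not values from any production system or regulation.

```python
from typing import Optional

# Hypothetical warning threshold in seconds; real systems tune this per speed and use case.
TTC_WARN_S = 2.5


def ttc_from_scale(width_prev_px: float, width_curr_px: float, dt_s: float) -> Optional[float]:
    """Estimate time-to-collision (s) from how much a tracked object's
    bounding box grew between two frames of a monocular camera.

    Pinhole model: image width ~ 1 / range, so s = w_curr / w_prev = Z_prev / Z_curr.
    With constant closing speed v: Z_prev - Z_curr = v * dt, hence
    TTC = Z_curr / v = dt / (s - 1).
    Returns None if the box is not growing (object not approaching).
    """
    if width_prev_px <= 0 or width_curr_px <= 0 or dt_s <= 0:
        raise ValueError("widths and dt must be positive")
    scale = width_curr_px / width_prev_px
    if scale <= 1.0:
        return None
    return dt_s / (scale - 1.0)


def should_warn(ttc_s: Optional[float], threshold_s: float = TTC_WARN_S) -> bool:
    """Raise a forward collision warning when TTC drops below the threshold."""
    return ttc_s is not None and ttc_s < threshold_s


if __name__ == "__main__":
    dt = 1 / 30  # one frame at 30 fps
    for prev_w, curr_w in [(100.0, 101.0), (100.0, 102.0), (100.0, 99.0)]:
        ttc = ttc_from_scale(prev_w, curr_w, dt)
        label = "n/a" if ttc is None else f"{ttc:.2f} s"
        print(f"{prev_w:.0f} px -> {curr_w:.0f} px: TTC = {label}, warn = {should_warn(ttc)}")
```

Detector widths are noisy in practice, so the scale ratio is usually smoothed over several frames (for example with a Kalman filter) before the TTC value is trusted for braking decisions.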

Key Algorithms and Techniques in Vision-Based ADAS

- Edge Detection (Canny, Sobel): For lane markings and road boundaries

- Object Detection (YOLO, SSD, Faster R-CNN): For locating vehicles, pedestrians, and signs

- Semantic Segmentation (UNet, DeepLab): For understanding road scenes

- Optical Flow & SLAM: For estimating motion between frames and for Simultaneous Localization and Mapping

- 3D Reconstruction: For recovering scene depth and geometry, typically with stereo cameras

- Kalman & Particle Filters: For object tracking over time
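To illustrate the first item, here is a minimal pure-Python sketch of the Sobel operator, the gradient step that Canny edge detection also builds on (Canny adds Gaussian smoothing, non-maximum suppression, and hysteresis thresholding, none of which are shown here). It assumes a small grayscale image stored as a list of rows, and the threshold value is an arbitrary assumption. In practice you would call an optimized library routine such as OpenCV's `cv2.Sobel` or `cv2.Canny` instead.

```python
import math
from typing import List

Image = List[List[float]]

# 3x3 Sobel kernels. SOBEL_X responds to left-right intensity changes, i.e.
# near-vertical edges such as the borders of a lane marking seen from a front camera.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def sobel_magnitude(img: Image) -> Image:
    """Gradient magnitude sqrt(Gx^2 + Gy^2) for every interior pixel.

    The kernels are applied as a sliding-window correlation (not flipped);
    flipping would only change the sign of Gx/Gy, not the magnitude.
    Border pixels are left at 0 to keep the sketch short.
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = gy = 0.0
            for ky in range(3):
                for kx in range(3):
                    p = img[y + ky - 1][x + kx - 1]
                    gx += SOBEL_X[ky][kx] * p
                    gy += SOBEL_Y[ky][kx] * p
            out[y][x] = math.hypot(gx, gy)
    return out


def edge_mask(img: Image, threshold: float) -> List[List[bool]]:
    """True where the gradient magnitude exceeds the threshold."""
    return [[v > threshold for v in row] for row in sobel_magnitude(img)]


if __name__ == "__main__":
    # Dark asphalt (20) with a one-pixel-wide bright lane marking (200) in column 3.
    road = [[20, 20, 20, 200, 20, 20, 20] for _ in range(5)]
    for row in edge_mask(road, threshold=100.0):
        print("".join("#" if e else "." for e in row))
```

The detected edges appear on both sides of the marking rather than at its center. A lane detector would then group such edge pixels and fit lines or curves to them (for example with a Hough transform), which this sketch does not attempt.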

Hardware Used in Vision-Based ADAS

Camera Types:

- Monocular Camera: Single lens for basic vision processing

- Stereo Camera: For depth perception

- Surround View Systems: Multiple cameras for 360° vision
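A stereo camera perceives depth through disparity. For a rectified stereo pair with focal length f (in pixels) and baseline B (the distance between the two lenses), a point that shifts by d pixels between the left and right images lies at depth Z = f·B / d. The sketch below also computes the first-order depth uncertainty |ΔZ| ≈ Z² / (f·B) · Δd, which shows why stereo depth becomes less precise with distance. The focal length, baseline, and one-pixel matching error in the example are illustrative assumptions, not the specifications of any particular camera.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        # Zero disparity corresponds to a point at infinity; negative means a bad match.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_uncertainty_m(focal_px: float, baseline_m: float, depth_m: float,
                        disparity_err_px: float = 1.0) -> float:
    """First-order depth error from a disparity error: |dZ| ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


if __name__ == "__main__":
    f_px, baseline = 1000.0, 0.12  # assumed camera: 1000 px focal length, 12 cm baseline
    for d in (20.0, 4.0):
        z = stereo_depth_m(f_px, baseline, d)
        err = depth_uncertainty_m(f_px, baseline, z)
        print(f"disparity {d:.0f} px -> depth {z:.1f} m, +/- {err:.1f} m")
```

With these assumed numbers, going from 6 m to 30 m raises the error from about 0.3 m to about 7.5 m, because the error grows with the square of the distance.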

Processing Units:

- SoCs (NVIDIA Drive, Mobileye EyeQ, Qualcomm Snapdragon Ride)

- Edge AI Accelerators

- FPGAs & ASICs: For low-latency image processing

Sensor Fusion: Enhancing Vision with Radar & LiDAR

While cameras are cost-effective, they struggle in poor lighting or weather. Hence, ADAS systems fuse camera data with:

- Radar: For precise distance and velocity

- LiDAR: For 3D mapping

- IMU & GPS: For localization

Sensor fusion combines their strengths for robust, accurate perception.
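One simple way to combine a camera range estimate with a radar range estimate is inverse-variance weighting, which is also what a Kalman filter's measurement update reduces to for a static quantity: each sensor is weighted by how certain it is. A minimal sketch, assuming both measurements refer to the same object, have independent Gaussian errors, and come with known variances (the numbers are made up for illustration):

```python
from typing import Tuple


def fuse_ranges(z_cam: float, var_cam: float,
                z_radar: float, var_radar: float) -> Tuple[float, float]:
    """Inverse-variance fusion of two independent range measurements.

    Returns (fused_range, fused_variance). The fused variance is always
    smaller than either input variance.
    """
    if var_cam <= 0 or var_radar <= 0:
        raise ValueError("variances must be positive")
    w_cam, w_radar = 1.0 / var_cam, 1.0 / var_radar  # weight = certainty
    fused_var = 1.0 / (w_cam + w_radar)
    fused = fused_var * (w_cam * z_cam + w_radar * z_radar)
    return fused, fused_var


if __name__ == "__main__":
    # Illustrative values: camera 20.0 m (sigma 2.0 m), radar 18.0 m (sigma 0.5 m).
    z, var = fuse_ranges(20.0, 2.0 ** 2, 18.0, 0.5 ** 2)
    print(f"fused range {z:.2f} m, sigma {var ** 0.5:.2f} m")
```

The result (about 18.1 m) sits close to the more precise radar reading, and its uncertainty is lower than that of either sensor alone. Real systems track objects over time with full Kalman or particle filters and must also decide which camera detection belongs to which radar target, a data-association step this sketch skips.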

Challenges in Vision-Based ADAS Systems

- Lighting Variability: Performance degrades in low light, at night, or under glare

- Weather Conditions: Rain, fog, or snow reduce visibility

- Computational Cost: Real-time processing needs powerful hardware

- Data Annotation: Training requires massive labeled datasets

- Ethical and Legal Issues: Decision-making in crash scenarios

Deep Learning in Computer Vision for ADAS

Traditional hand-crafted image processing techniques are increasingly being replaced or complemented by deep learning:

- Convolutional Neural Networks (CNNs) for object recognition

- Recurrent Neural Networks (RNNs) for temporal tracking

- Transformer models for attention-based visual understanding

These models achieve high accuracy but need significant compute and memory.
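To put "significant compute" in perspective, the number of multiply-accumulate operations (MACs) in one standard 2D convolution layer is H_out × W_out × C_out × C_in × k². The sketch below evaluates this for one hypothetical 3×3, 64-to-64-channel layer applied at full-HD resolution. Real networks downsample early and vary layer by layer, so treat the result only as an order-of-magnitude illustration.

```python
def conv2d_macs(out_h: int, out_w: int, c_in: int, c_out: int, k: int) -> int:
    """MACs for one standard (non-grouped) 2D convolution layer, bias ignored.

    Each of the out_h * out_w * c_out output values needs c_in * k * k
    multiply-accumulates.
    """
    return out_h * out_w * c_out * c_in * k * k


if __name__ == "__main__":
    macs = conv2d_macs(out_h=1080, out_w=1920, c_in=64, c_out=64, k=3)
    fps = 30
    print(f"{macs / 1e9:.1f} GMAC per frame")
    print(f"{macs * fps / 1e12:.2f} TMAC/s at {fps} fps")
```

That is roughly 76 billion MACs per frame, or over 2 trillion per second at 30 fps, for a single layer on a single camera stream.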

Edge AI and Real-Time Processing

ADAS requires real-time inference with low latency. Hence, Edge AI chips run models directly in the vehicle without relying on cloud servers.

Popular tools:

- TensorRT (NVIDIA)

- ONNX Runtime

- OpenVINO (Intel)

These tools optimize trained models, for example through layer fusion and reduced-precision (FP16/INT8) inference, so they run faster on embedded hardware.
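The real-time requirement can be made concrete with a simple budget check. At a given frame rate each frame has 1000/fps milliseconds available, and the car keeps moving while the pipeline is computing. The stage names and timings below are hypothetical placeholders, and the sketch assumes the stages run one after another; real pipelines often overlap stages across frames, which raises throughput but does not reduce the latency of any single frame.

```python
from typing import Dict


def frame_budget_ms(fps: float) -> float:
    """Time available per frame if every frame must be processed."""
    return 1000.0 / fps


def distance_during_latency_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance travelled while the perception result is still being computed."""
    return (speed_kmh / 3.6) * (latency_ms / 1000.0)


def pipeline_latency_ms(stages: Dict[str, float]) -> float:
    """End-to-end latency assuming stages execute sequentially."""
    return sum(stages.values())


if __name__ == "__main__":
    # Hypothetical per-frame stage timings in milliseconds.
    stages = {"capture_isp": 8.0, "preprocess": 3.0, "inference": 15.0, "tracking": 4.0}
    total = pipeline_latency_ms(stages)
    budget = frame_budget_ms(30)
    print(f"latency {total:.1f} ms, budget {budget:.1f} ms, fits: {total <= budget}")
    print(f"at 100 km/h: {distance_during_latency_m(100, total):.2f} m travelled before a result")
```

Inference optimizers such as TensorRT, ONNX Runtime, and OpenVINO target the inference term in this budget.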

Data Annotation and Model Training

A critical part of ADAS development is creating massive annotated datasets.

Public datasets:

- KITTI

- Cityscapes

- Waymo Open Dataset

Annotation categories:

- Lanes, vehicles, signs, pedestrians

- Weather conditions and occlusions

Tools such as Labelbox, CVAT, and SuperAnnotate are commonly used for labeling.
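Labels from one dataset often have to be converted into the format a training framework expects. As a sketch, the function below turns a KITTI 2D object label line (object type first, then truncation, occlusion, observation angle, and the 2D box as left, top, right, bottom pixel coordinates, followed by 3D fields that are ignored here) into the normalized `class x_center y_center width height` text format used by YOLO-style trainers. The class mapping, the image size, and the label line in the example are illustrative assumptions; check the dataset's own devkit documentation before relying on the field layout.

```python
from typing import Dict, Optional

# Hypothetical class-to-index mapping; KITTI has more types (Van, Truck, Tram, ...).
CLASS_IDS: Dict[str, int] = {"Car": 0, "Pedestrian": 1, "Cyclist": 2}


def kitti_to_yolo(line: str, img_w: int, img_h: int) -> Optional[str]:
    """Convert one KITTI object label line to 'class xc yc w h' (normalized 0..1).

    Uses field 0 (type) and fields 4..7 (2D box: left, top, right, bottom in px).
    Returns None for types not in CLASS_IDS, e.g. 'DontCare' regions.
    """
    fields = line.split()
    obj_type = fields[0]
    if obj_type not in CLASS_IDS:
        return None
    left, top, right, bottom = map(float, fields[4:8])
    xc = (left + right) / 2.0 / img_w
    yc = (top + bottom) / 2.0 / img_h
    bw = (right - left) / img_w
    bh = (bottom - top) / img_h
    return f"{CLASS_IDS[obj_type]} {xc:.6f} {yc:.6f} {bw:.6f} {bh:.6f}"


if __name__ == "__main__":
    # Made-up label line; the image size is assumed to be 1242 x 375 px.
    sample = "Car 0.00 0 -1.57 100.00 150.00 300.00 250.00 1.50 1.60 3.90 1.00 1.70 20.00 -1.55"
    print(kitti_to_yolo(sample, img_w=1242, img_h=375))
```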

Regulatory & Safety Standards

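ADAS and computer vision components are governed by safety standards and regulations, and shaped by consumer safety ratings:

- ISO 26262: Functional safety of electrical and electronic systems in road vehicles

- UNECE Regulation No. 152: For AEBS (Advanced Emergency Braking Systems) in passenger cars and light commercial vehicles

- NCAP Ratings: Not a legal requirement, but consumer test programs such as Euro NCAP reward ADAS features and so encourage their adoption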

Validation and verification of vision systems are crucial for safety certification.

Future Trends: Toward Full Autonomy

Vision systems are evolving toward higher levels of automation (SAE Level 4, high automation, and Level 5, full automation), with trends such as:

- End-to-End Learning: Replacing rule-based logic with deep policies

- Self-Supervised Learning: Learning from unannotated data

- Event Cameras: Capturing motion with ultra-low latency

- Neural Radiance Fields (NeRF): For reconstructing realistic 3D scenes from camera images

As costs drop and algorithms improve, camera-based autonomy is expected to become increasingly common.

Conclusion

Computer vision has transformed modern vehicles from passive machines into active assistants that perceive and react to their surroundings. It is the eyes, and much of the perception logic, behind many ADAS features, from staying in lanes to avoiding deadly collisions.

While challenges remain, rapid advancements in AI, edge computing, and sensor fusion are pushing the boundaries of what’s possible. As we steer toward a future of autonomous driving, vision-based ADAS will remain at the core, ensuring safety, efficiency, and intelligence on the roads.

This was about “Computer Vision In ADAS: From Lane Keeping To Collision Avoidance”. Thank you for reading.