Hello guys, welcome back to my blog! 📸✨

Today, we’re diving into one of the most fascinating and game-changing innovations in the world of computer vision and artificial intelligence — Event-Based Cameras.

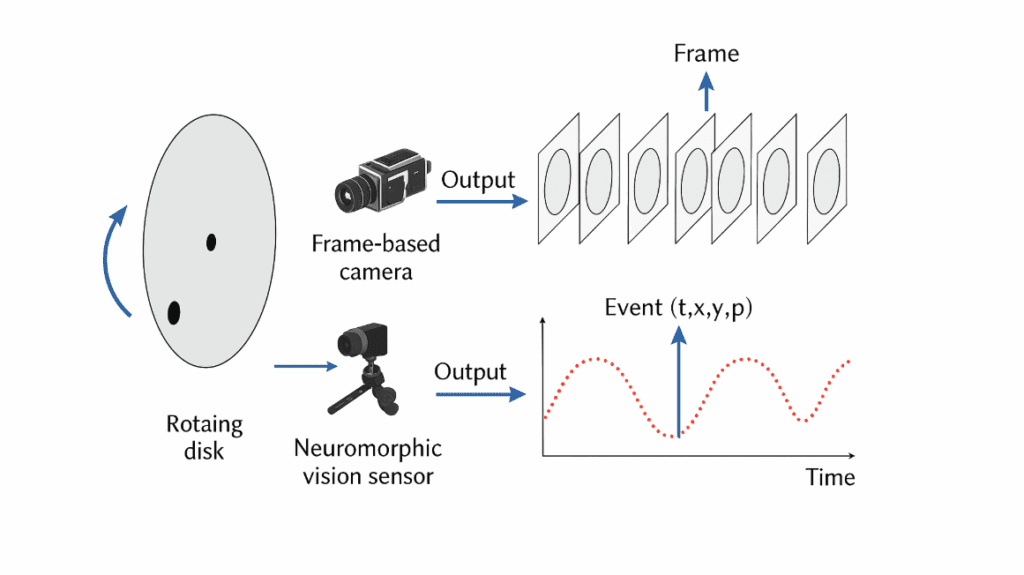

For decades, we’ve relied on traditional cameras — the kind that capture images frame by frame — to power everything from smartphones and CCTV systems to advanced robotics and autonomous cars. These cameras have served us incredibly well, giving machines the ability to “see” the world in pixels and motion.

But as technology pushes the limits of speed, accuracy, and intelligence, traditional cameras are starting to show their age. Imagine trying to record a hummingbird flapping its wings or tracking lightning in real time: a normal camera often captures it as a blur, or misses it altogether. That’s because it collects light over an exposure window and takes snapshots at fixed intervals, so fast motion smears within a frame and the micro-moments between frames are lost.

Now, picture a camera that doesn’t take pictures at all. Instead, it reacts instantly — pixel by pixel — every time something changes in brightness. No waiting for frames. No wasted data. Just pure, continuous vision. ⚡

That’s exactly what an Event-Based Camera does — a revolutionary sensor inspired by how the human eye and brain work together. It doesn’t just see the world; it perceives it, reacting instantly to motion, light, and change.

Whether it’s powering autonomous vehicles, drones, robotics, or neuroscience-inspired AI systems, event-based cameras are opening the door to a completely new way of visualizing reality — one that’s faster, smarter, and far more efficient.

In this article, we’re going to break down:

- How a normal camera actually works under the hood.

- How an event-based camera flips the whole concept of imaging on its head.

- Their differences, advantages, challenges, and future applications.

- And finally, why event-based vision could be the missing piece for next-gen AI and robotics.

So, if you’ve ever been curious about how machines “see” — and how we’re teaching them to see more like us — stick around till the end. This is going to be a fascinating deep dive into the battle of vision technologies: Normal Camera vs Event-Based Camera. 🚀

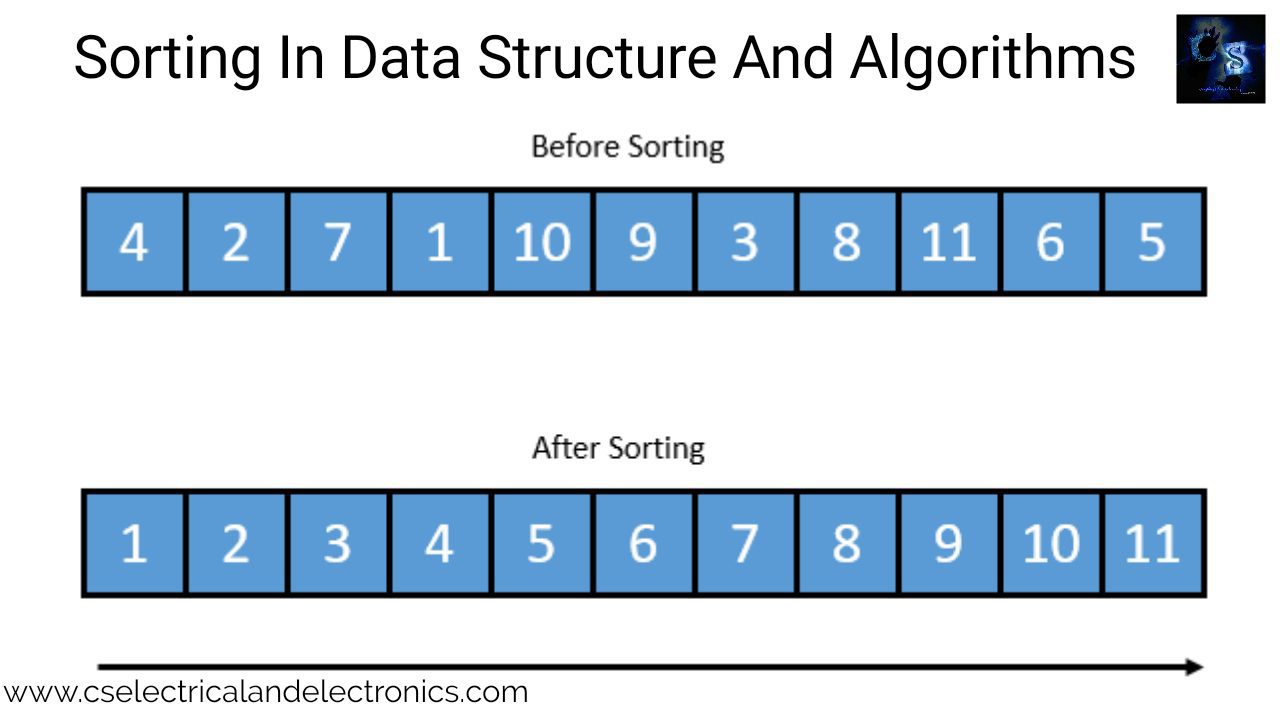

Normal Camera Vs Event-Based Camera

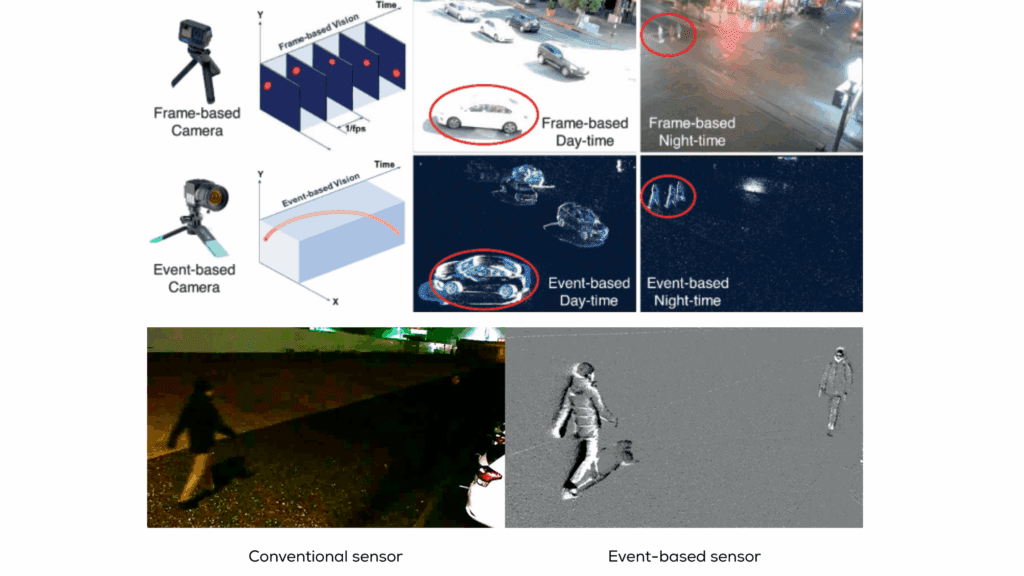

In the realm of computer vision, the evolution of imaging technologies has been pivotal in advancing various applications, from autonomous vehicles to robotics and augmented reality. Traditional cameras, which capture frames at fixed intervals, have served as the cornerstone of visual perception systems. However, they exhibit limitations in scenarios involving rapid motion or challenging lighting conditions. Enter event-based cameras, a paradigm shift in visual sensing that promises to overcome these constraints.

Event-based cameras, often called Dynamic Vision Sensors (DVS) after their best-known sensor design, operate on a fundamentally different principle. Instead of capturing frames at regular intervals, they detect changes in the scene at the level of individual pixels, operating asynchronously. This approach offers significant advantages in terms of temporal resolution, dynamic range, and power efficiency.

This article delves into the differences between normal and event-based cameras, exploring their operational mechanisms, advantages, limitations, and applications. By understanding these distinctions, we can appreciate how event-based cameras are poised to revolutionize vision systems in dynamic environments.

How Normal Cameras Work

1.1 Basic Operation

Normal cameras, or frame-based cameras, function by capturing a series of images (frames) at fixed intervals, typically measured in frames per second (fps). Each frame represents a snapshot of the scene at a particular moment in time. The camera’s sensor, usually a Charge-Coupled Device (CCD) or Complementary Metal-Oxide-Semiconductor (CMOS) sensor, converts light into electrical signals, which are then processed to form an image.

1.2 Frame Rate and Exposure

The frame rate determines how many frames the camera captures per second. Common frame rates include:

- 24 fps: Standard for cinematic video.

- 30 fps: Common in television broadcasts in NTSC regions (PAL regions use 25 fps).

- 60 fps: Used for smoother video and gaming.

Exposure time refers to the duration for which the camera’s sensor is exposed to light. Longer exposure times allow more light to hit the sensor, resulting in brighter images, but can lead to motion blur if the subject moves during exposure.
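To put rough numbers on frame rate and exposure, here is a minimal sketch. The function names and the example subject speed are made up for illustration; the blur estimate simply assumes the subject moves at constant speed across the sensor while the shutter is open.

```python
def frame_interval_ms(fps: float) -> float:
    """Time between the starts of consecutive frames, in milliseconds."""
    return 1000.0 / fps


def motion_blur_px(speed_px_per_s: float, exposure_s: float) -> float:
    """Approximate blur length: distance travelled on the sensor during one exposure."""
    return speed_px_per_s * exposure_s


for fps in (24, 30, 60):
    print(f"{fps} fps -> one frame every {frame_interval_ms(fps):.1f} ms")

# Hypothetical subject crossing the image at 2000 px/s, shot with a 1/100 s exposure.
print(f"blur is about {motion_blur_px(2000, 1 / 100):.0f} px")
```

Even at 60 fps there is a gap of roughly 16.7 ms between frames, and a shorter exposure reduces blur only at the cost of a darker image.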

1.3 Limitations

While traditional cameras are suitable for many applications, they have notable limitations:

- Motion Blur: Fast-moving objects can appear blurred because they move across the sensor during the exposure time of a single frame.

- Latency: The time between capturing frames can result in delayed response to dynamic changes in the scene.

- Dynamic Range: Limited ability to capture both very bright and very dark areas simultaneously.

- Power Consumption: Continuous operation at high frame rates can lead to significant power usage.

How Event-Based Cameras Work

2.1 Asynchronous Operation

Event-based cameras operate asynchronously, meaning each pixel functions independently. Instead of capturing frames at fixed intervals, each pixel monitors light intensity (in most designs, its logarithm) and generates an event when the change since its last event exceeds a set threshold. Each event carries the pixel’s coordinates, the timestamp of the change, and the polarity (increase or decrease in brightness).
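The per-pixel behaviour described above can be sketched in a few lines. This is an idealized model, not any vendor's implementation: the `Event` and `EventPixel` names, the 0.2 threshold, and the choice to compare log intensities are all assumptions made for illustration, and real sensors add noise and a refractory period.

```python
import math
from typing import List, NamedTuple


class Event(NamedTuple):
    x: int
    y: int
    t_us: int      # timestamp in microseconds
    polarity: int  # +1 = brighter, -1 = darker


class EventPixel:
    """Idealized pixel: fires when log intensity drifts past a threshold."""

    def __init__(self, x: int, y: int, intensity: float, threshold: float = 0.2):
        self.x, self.y = x, y
        self.threshold = threshold      # hypothetical contrast threshold
        self.ref = math.log(intensity)  # log intensity at the last event

    def update(self, intensity: float, t_us: int) -> List[Event]:
        """Feed a new (positive) intensity sample; return any events it triggers."""
        events = []
        diff = math.log(intensity) - self.ref
        # A large change emits several events, one per threshold crossing.
        while abs(diff) >= self.threshold:
            polarity = 1 if diff > 0 else -1
            self.ref += polarity * self.threshold
            events.append(Event(self.x, self.y, t_us, polarity))
            diff = math.log(intensity) - self.ref
        return events


pixel = EventPixel(x=3, y=7, intensity=100.0)
print(pixel.update(100.0, t_us=10))  # unchanged scene: no events
print(pixel.update(150.0, t_us=20))  # brighter: positive events
print(pixel.update(90.0, t_us=30))   # darker: negative events
```

Note that an unchanged pixel produces nothing at all, which is where the data and power savings discussed later come from.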

2.2 High Temporal Resolution

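Event-based cameras offer very high temporal resolution: events are typically timestamped with microsecond precision, and the delay between a brightness change and the corresponding event is far shorter than a frame interval. This lets them capture rapid movements and transient events that traditional cameras might miss.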

2.3 High Dynamic Range

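Event-based cameras can handle a wide range of lighting conditions because each pixel responds to relative changes in brightness (typically changes in log intensity) and adapts independently, rather than sharing one global exposure setting. As a result, they can register activity in very bright and very dark areas of the same scene without the overexposure or underexposure a single exposure setting would cause, providing a high dynamic range (HDR).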

2.4 Low Power Consumption

Since event-based cameras only report changes in the scene, they can consume significantly less power than traditional cameras operating at high frame rates, especially when most of the scene is static. This efficiency makes them suitable for battery-powered applications.

Frame-Based vs Event-Based: The Core Difference

| Feature | Normal Camera | Event-Based Camera |
|---|---|---|
| Operation | Captures full frames at fixed intervals | Captures pixel-wise changes asynchronously |
| Data Output | Series of frames (images) | Stream of events (x, y, polarity, timestamp) |
| Temporal Resolution | Limited by frame rate (e.g., 30–120 fps) | Microsecond-level resolution |
| Latency | High (depends on frame timing) | Ultra-low (sub-millisecond) |
| Motion Blur | Common at high speed | Negligible or none |
| Dynamic Range | ~60–70 dB | >120 dB |
| Power Consumption | High (processes all pixels) | Low (only active pixels transmit) |
| Data Volume | Large (even for static scenes) | Small (only changes transmitted) |
| Ideal Use Case | Static or moderate motion | High-speed or high dynamic range environments |

Advantages of Event-Based Cameras

⚡ 1. Ultra-fast response

Event cameras can react within microseconds of a brightness change, far sooner than a frame-based camera that must wait for its next frame.

This allows real-time tracking and control in robotics and automation.

🌗 2. High dynamic range

They can handle scenes with both bright and dark areas simultaneously (e.g., a tunnel exit).

Dynamic range often exceeds 120 dB, compared to ~70 dB for normal cameras.
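To get a feel for what those decibel figures mean, here is a small conversion sketch. It assumes the usual image-sensor convention of 20·log10 of the ratio between the brightest and darkest measurable signal; the function names are made up for illustration.

```python
import math


def dynamic_range_db(brightest: float, darkest: float) -> float:
    """Dynamic range in decibels for a ratio of signal levels."""
    return 20.0 * math.log10(brightest / darkest)


def db_to_ratio(db: float) -> float:
    """Brightest-to-darkest ratio that corresponds to a dynamic range in dB."""
    return 10.0 ** (db / 20.0)


for db in (70, 120):
    print(f"{db} dB is roughly {db_to_ratio(db):,.0f} : 1")
```

Under this convention, 120 dB corresponds to a ratio of about a million to one, while 70 dB is only a few thousand to one.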

🔋 3. Low power consumption

Only active pixels send data, drastically reducing energy consumption — ideal for IoT, drones, and embedded systems.

💾 4. Reduced data volume

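Instead of sending 30 full frames per second, the camera transmits only changes.

For static or slowly changing scenes this results in compact data that is easier to process and transmit in real time; very busy scenes generate more events, so the savings depend on scene activity.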
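A back-of-the-envelope comparison makes the data-volume point more concrete. Every number here is an assumption chosen for illustration: a 640×480 grayscale sensor at 30 fps, a hypothetical encoding of 8 bytes per event, and two made-up event rates for a quiet and a busy scene.

```python
def frame_rate_bytes(width: int, height: int, bytes_per_pixel: int, fps: int) -> int:
    """Bytes per second for uncompressed frames."""
    return width * height * bytes_per_pixel * fps


def event_rate_bytes(events_per_second: int, bytes_per_event: int = 8) -> int:
    """Bytes per second for an event stream (8 bytes per event is an assumption)."""
    return events_per_second * bytes_per_event


frame_bps = frame_rate_bytes(640, 480, 1, 30)
print(f"frames:      {frame_bps / 1e6:.1f} MB/s")

# Hypothetical event rates; real rates depend on the sensor and the scene.
for label, rate in (("quiet scene", 100_000), ("busy scene ", 5_000_000)):
    print(f"{label}: {event_rate_bytes(rate) / 1e6:.1f} MB/s")
```

With these assumed numbers the quiet scene needs far less bandwidth than the frame stream, while the busy scene needs more, so the advantage holds mainly when only part of the scene is changing.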

🚫 5. No motion blur

Because pixels react independently and immediately, moving objects remain sharp even at high speeds.

🧠 6. Neuromorphic compatibility

Event-based output maps naturally onto spiking neural networks (SNNs), which process sparse, timed spikes much as biological visual systems do.
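To illustrate why timed events suit spiking models, here is a toy leaky integrate-and-fire (LIF) neuron driven directly by event timestamps. It is a sketch only: the class name, time constant, weight, and threshold are arbitrary values picked for the example, not taken from any SNN library.

```python
import math


class LIFNeuron:
    """Toy leaky integrate-and-fire neuron fed by event timestamps."""

    def __init__(self, tau_us: float = 10_000.0, weight: float = 0.4, v_thresh: float = 1.0):
        self.tau_us = tau_us      # leak time constant in microseconds (assumed)
        self.weight = weight      # potential added per input event (assumed)
        self.v_thresh = v_thresh  # firing threshold (assumed)
        self.v = 0.0
        self.last_t_us = 0

    def receive(self, t_us: int) -> bool:
        """Process one input event; return True if the neuron spikes."""
        # Let the membrane potential leak for the time since the last input.
        self.v *= math.exp(-(t_us - self.last_t_us) / self.tau_us)
        self.last_t_us = t_us
        self.v += self.weight
        if self.v >= self.v_thresh:
            self.v = 0.0  # reset after spiking
            return True
        return False


burst = LIFNeuron()
print([burst.receive(t) for t in (0, 100, 200)])          # events close together

sparse = LIFNeuron()
print([sparse.receive(t) for t in (0, 50_000, 100_000)])  # events far apart
```

The neuron fires only when events arrive close together in time, so timing itself carries information, and nothing is computed while the input is silent.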

Technical Comparison

| Feature | Normal Camera | Event-Based Camera |
|---|---|---|
| Operation | Frame-based (captures full frames) | Asynchronous (captures changes per pixel) |
| Temporal Resolution | Limited by frame rate | Microsecond-level |
| Dynamic Range | Moderate | High |
| Power Consumption | High (due to continuous operation) | Low (only active pixels processed) |
| Motion Blur | Present | Virtually eliminated |
| Latency | Higher | Lower |

Applications

3.1 Autonomous Vehicles

In autonomous driving, rapid response to dynamic changes in the environment is crucial. Event-based cameras can detect fast-moving objects, such as pedestrians or other vehicles, with minimal latency, enhancing the vehicle’s ability to make timely decisions.

3.2 Robotics

Robotic systems, especially those operating in dynamic environments, benefit from the high temporal resolution of event-based cameras. These cameras enable precise motion tracking and interaction with rapidly changing scenes.

3.3 Augmented Reality (AR) and Virtual Reality (VR)

For immersive AR and VR experiences, real-time interaction with the environment is essential. Event-based cameras provide the necessary responsiveness to changes in the user’s movements and surroundings.

3.4 Industrial Automation

In manufacturing and quality control, detecting fast-moving components or subtle defects requires high-speed imaging. Event-based cameras can capture these details without motion blur, improving inspection accuracy.

3.5 Surveillance and Security

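Event-based cameras can enhance surveillance systems by reliably detecting fast-moving subjects, even in low-light conditions, improving security monitoring effectiveness. Because a static scene produces few events, they are often better suited to detecting and tracking motion than to recording conventional images.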

Summary of Advantages

- High Temporal Resolution: Capable of capturing rapid movements with minimal latency.

- High Dynamic Range: Can simultaneously capture bright and dark areas without overexposure or underexposure.

- Low Power Consumption: Only processes changes in the scene, leading to significant power savings.

- No Motion Blur: Eliminates blur associated with fast-moving objects.

- Asynchronous Operation: Each pixel operates independently, allowing for efficient data processing.

Challenges and Limitations

Despite their advantages, event-based cameras face several challenges:

- Data Processing Complexity: The asynchronous nature of the data requires specialized algorithms for processing and interpretation.

- Limited Spatial Resolution: Current event-based cameras may have lower spatial resolution compared to traditional cameras.

- Cost: Event-based cameras are generally more expensive due to their advanced technology.

- Integration with Existing Systems: Incorporating event-based cameras into existing infrastructures may require significant modifications.
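One common way to bridge the gap with frame-based software is to accumulate events over a short time window into a 2D grid that ordinary image algorithms can consume. The sketch below assumes events arrive as `(x, y, timestamp_us, polarity)` tuples; the function name and tuple layout are illustrative, not a standard API.

```python
from typing import Iterable, List, Tuple

Event = Tuple[int, int, int, int]  # (x, y, timestamp_us, polarity) - assumed layout


def accumulate_events(
    events: Iterable[Event],
    width: int,
    height: int,
    t_start_us: int,
    t_end_us: int,
) -> List[List[int]]:
    """Sum event polarities per pixel for events with t_start_us <= t < t_end_us."""
    frame = [[0] * width for _ in range(height)]
    for x, y, t_us, polarity in events:
        if t_start_us <= t_us < t_end_us:
            frame[y][x] += polarity
    return frame


events = [
    (1, 0, 100, +1),
    (1, 0, 250, +1),
    (2, 1, 400, -1),
    (0, 1, 1500, +1),  # falls outside the window below
]
for row in accumulate_events(events, width=3, height=2, t_start_us=0, t_end_us=1000):
    print(row)
```

This trades away some of the timing precision in exchange for compatibility, which is one reason specialized event-native algorithms remain an active area of work.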

Future Trends

The field of event-based vision is rapidly evolving. Future developments may include:

- Improved Spatial Resolution: Advances in sensor technology may lead to higher resolution event-based cameras.

- Integration with Machine Learning: Combining event-based cameras with machine learning algorithms can enhance object detection and classification.

- Cost Reduction: As the technology matures, the cost of event-based cameras is expected to decrease, making them more accessible.

- Wider Adoption: Increased awareness and understanding of the benefits of event-based cameras may lead to broader adoption across various industries.

Conclusion

Event-based cameras represent a significant advancement in visual sensing technology. By capturing changes in the scene at the pixel level with high temporal resolution, they offer advantages over traditional frame-based cameras in dynamic environments. While challenges remain, ongoing research and development are likely to address these issues, paving the way for broader adoption and new applications.

Understanding the distinctions between normal and event-based cameras is crucial for selecting the appropriate technology for specific applications. As the field progresses, event-based cameras are poised to play a pivotal role in the future of vision systems.

This was about “Normal Camera Vs Event-Based Camera: Understanding the Future of Vision Systems”. Thank you for reading.
